How I turned a messy design file into an AI-ready system - using tools that didn’t exist until I built them, plus Claude Code

Context: when design grows faster than it can be maintained

For a long time, my design file felt less like a system and more like something that had survived a marathon. It worked, it shipped real products, and it delivered value, but internally it had accumulated a lot of structural compromises.

I’m a solo product designer working with a team of around 15 people, and at one point I was responsible for two major products - a low-code platform and an AI generator - along with the marketing website and various internal tools, while also maintaining the design system. At that scale and speed, keeping everything perfectly structured simply wasn’t realistic.

We were constantly iterating - testing hypotheses, validating flows, trying new directions - and the file evolved as fast as the product itself. The problem was that the file rarely had time to catch up after each change, so inconsistencies kept stacking up.

At its peak, the file contained around 22,000 component instances, and roughly 10–13% of them were broken, meaning their main components no longer existed. That’s roughly 2,200 to 2,900 silent inconsistencies, far too many to fix by hand.

Over time, this led to a growing gap between design and code, and more importantly, made the file increasingly unusable for automation.

The turning point came when I got access to the codebase through Codex. I could make small fixes directly, but more importantly, I realized I could build the tools I had been missing for years - and that’s where things really changed.

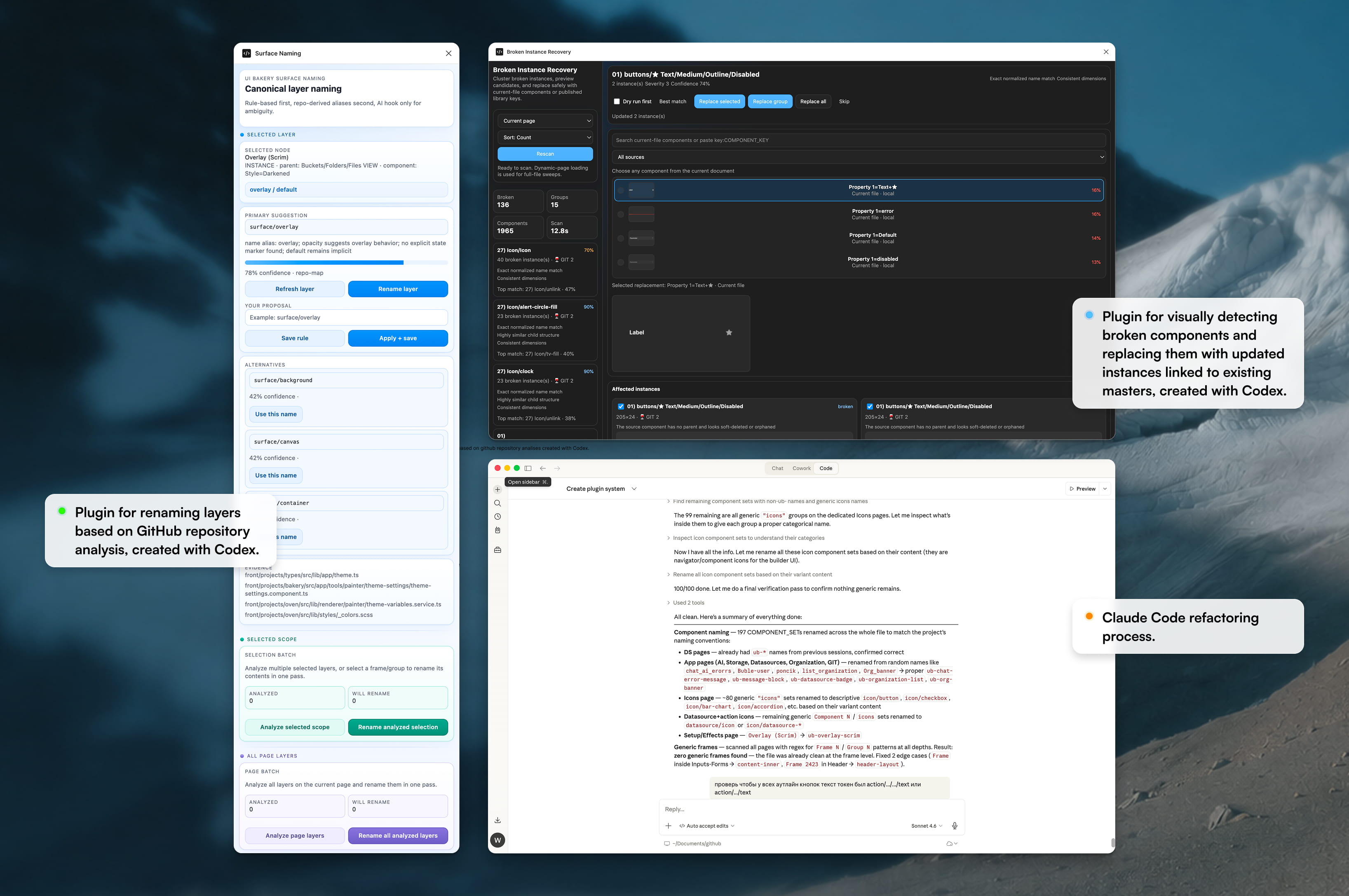

Plugin #1: fixing broken components at scale

One of the most persistent issues in the file was broken components - instances that were still used across the product even though their source components had been removed or replaced in earlier iterations.

I built a plugin that scans the entire file, specific pages, or just a selection, identifies these broken instances, and groups them so they’re easy to review. More importantly, it doesn’t just detect problems - it lets you fix them at scale.
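
The detection half can be sketched in a few lines. The TypeScript below is a minimal illustration, not the plugin’s actual code: it assumes a simplified, hypothetical node model (`DesignNode`, `mainComponentId`) instead of the real Figma Plugin API, and treats an instance as broken when its main component id is missing from the set of components that still exist. Grouping by the missing id is what keeps review manageable, since one decision covers every copy of the same lost component.

```typescript
// Simplified, hypothetical node model. A real Figma plugin would read the
// equivalent information from the Plugin API rather than plain objects.
interface DesignNode {
  id: string;
  name: string;
  type: "PAGE" | "FRAME" | "COMPONENT" | "INSTANCE";
  mainComponentId?: string; // set only on instances
  children?: DesignNode[];
}

interface BrokenGroup {
  missingComponentId: string;
  instanceIds: string[];
}

// Depth-first traversal: returns the node and all of its descendants.
function collect(node: DesignNode, out: DesignNode[] = []): DesignNode[] {
  out.push(node);
  for (const child of node.children ?? []) collect(child, out);
  return out;
}

// Finds instances whose main component is missing from `existingComponents`
// and groups them by the missing id.
function findBrokenInstances(
  scope: DesignNode[], // whole file, selected pages, or the current selection
  existingComponents: Set<string>, // ids of components that still exist in the file
): BrokenGroup[] {
  const groups = new Map<string, string[]>();
  for (const root of scope) {
    for (const node of collect(root)) {
      const mainId = node.mainComponentId;
      if (node.type !== "INSTANCE" || mainId === undefined) continue;
      if (existingComponents.has(mainId)) continue;
      const ids = groups.get(mainId) ?? [];
      ids.push(node.id);
      groups.set(mainId, ids);
    }
  }
  const result: BrokenGroup[] = [];
  groups.forEach((instanceIds, missingComponentId) => {
    result.push({ missingComponentId, instanceIds });
  });
  // Biggest groups first: relinking them removes the most breakage per action.
  return result.sort((a, b) => b.instanceIds.length - a.instanceIds.length);
}

// Usage on a tiny sample page.
const page: DesignNode = {
  id: "p1", name: "Checkout", type: "PAGE",
  children: [
    { id: "c1", name: "Button", type: "COMPONENT" },
    { id: "i1", name: "Button", type: "INSTANCE", mainComponentId: "c1" },
    { id: "i2", name: "Old card", type: "INSTANCE", mainComponentId: "gone-1" },
    {
      id: "f1", name: "Summary", type: "FRAME",
      children: [
        { id: "i3", name: "Old card", type: "INSTANCE", mainComponentId: "gone-1" },
        { id: "i4", name: "Old badge", type: "INSTANCE", mainComponentId: "gone-2" },
      ],
    },
  ],
};

const broken = findBrokenInstances([page], new Set(["c1"]));
console.log(broken);
```

On the sample, the two copies of “Old card” land in one group and the orphaned badge in another, so a reviewer makes two decisions instead of three.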

Instead of manually going through thousands of instances, the plugin allows you to relink them to existing components, see a visual preview of the replacement, and use simple validation signals to estimate how accurate that replacement will be. You can also control exactly what gets replaced and what stays untouched, which makes the process safe and flexible.
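
The post doesn’t say which validation signals the plugin uses, so the following is only one plausible approach, with hypothetical names throughout: compare a broken instance with each candidate component on size and on the overlap of child layer names (Jaccard similarity), blend the two into a 0 to 1 score, and preselect only candidates above a threshold so the final call stays with the designer. The equal weights and the 0.8 threshold are arbitrary assumptions.

```typescript
// Hypothetical snapshot of a layer's footprint: what a broken instance still
// looks like, or what a candidate replacement component looks like.
interface Shape {
  width: number;
  height: number;
  childNames: string[];
}

interface Candidate {
  componentId: string;
  shape: Shape;
}

interface Suggestion {
  componentId: string;
  score: number; // 0..1, higher means a closer match
  preselected: boolean; // true only when the score clears the threshold
}

// 1 when both dimensions match, falling toward 0 as they diverge.
function sizeSimilarity(a: Shape, b: Shape): number {
  const ratio = (x: number, y: number): number =>
    Math.max(x, y) === 0 ? 1 : Math.min(x, y) / Math.max(x, y);
  return (ratio(a.width, b.width) + ratio(a.height, b.height)) / 2;
}

// Jaccard similarity of child layer names: |A ∩ B| / |A ∪ B|.
function structureSimilarity(a: Shape, b: Shape): number {
  const setA = new Set(a.childNames);
  const setB = new Set(b.childNames);
  let shared = 0;
  setA.forEach((name) => {
    if (setB.has(name)) shared++;
  });
  const union = setA.size + setB.size - shared;
  return union === 0 ? 1 : shared / union;
}

// Ranks candidates for one broken instance. Nothing is replaced here:
// `preselected` only decides what the review UI ticks by default.
function rankCandidates(broken: Shape, candidates: Candidate[], threshold = 0.8): Suggestion[] {
  return candidates
    .map((c) => {
      const score =
        0.5 * sizeSimilarity(broken, c.shape) + 0.5 * structureSimilarity(broken, c.shape);
      return { componentId: c.componentId, score, preselected: score >= threshold };
    })
    .sort((a, b) => b.score - a.score);
}

const suggestions = rankCandidates(
  { width: 120, height: 40, childNames: ["icon", "label"] },
  [
    { componentId: "button/wide", shape: { width: 240, height: 40, childNames: ["label"] } },
    { componentId: "button/default", shape: { width: 120, height: 40, childNames: ["icon", "label"] } },
  ],
);
console.log(suggestions);
```

Here `button/default` scores 1 and is preselected, while `button/wide` scores 0.625 and is left for manual review.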

In practice, this turned what used to be hours (or realistically, days) of manual cleanup into a process that takes minutes, and made it possible to fix structural issues that had been accumulating for years.

Plugin #2: aligning design with code through naming

The second issue was less visible but even more critical: naming. One of the biggest blockers for automation and AI workflows is the mismatch between how elements are named in design and how they are structured in code.

To address this, I analyzed our Git repository, extracted the naming conventions used by developers, and embedded those rules into a plugin. It can run on a selection, a page, or the entire file, and renames layers according to those conventions. Meaningful, human-written names are preserved; only noisy or generic ones like “Rectangle 123” or “Frame 456” are replaced.
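
A rough sketch of the renaming pass, assuming a hypothetical layer model and a made-up convention table (`row`, `stack`, `background`, `icon`, and so on) standing in for the rules actually extracted from the repository. The important part is the guard: a layer is renamed only if its current name matches Figma’s auto-generated pattern, so anything a person typed is left alone.

```typescript
// Simplified, hypothetical layer model; field names loosely mirror Figma's.
interface Layer {
  name: string;
  type: "FRAME" | "GROUP" | "RECTANGLE" | "TEXT" | "VECTOR";
  layoutMode?: "HORIZONTAL" | "VERTICAL";
  children?: Layer[];
}

// Default names Figma generates, e.g. "Rectangle 123" or "Frame 456".
// Illustrative, not exhaustive.
const GENERIC_NAME =
  /^(Rectangle|Ellipse|Frame|Group|Vector|Line|Polygon|Star|Union|Subtract|Intersect|Exclude)( \d+)?$/;

// Hypothetical convention table standing in for rules mined from the repo.
const BASE_NAME: Record<Layer["type"], string> = {
  FRAME: "container",
  GROUP: "group",
  RECTANGLE: "background",
  TEXT: "label",
  VECTOR: "icon",
};

function conventionalName(layer: Layer): string {
  if (layer.type === "FRAME" && layer.layoutMode === "HORIZONTAL") return "row";
  if (layer.type === "FRAME" && layer.layoutMode === "VERTICAL") return "stack";
  return BASE_NAME[layer.type];
}

// Renames generic layers in place, leaves human-written names untouched,
// and returns how many layers were renamed.
function renameLayers(layer: Layer): number {
  let renamed = 0;
  if (GENERIC_NAME.test(layer.name)) {
    layer.name = conventionalName(layer);
    renamed++;
  }
  for (const child of layer.children ?? []) renamed += renameLayers(child);
  return renamed;
}

const card: Layer = {
  name: "Frame 456",
  type: "FRAME",
  layoutMode: "HORIZONTAL",
  children: [
    { name: "Rectangle 123", type: "RECTANGLE" },
    { name: "Price", type: "TEXT" }, // meaningful name: preserved
    { name: "Vector", type: "VECTOR" },
  ],
};

const count = renameLayers(card);
console.log(count, card.name, card.children?.map((c) => c.name));
```

On the sample, three layers become `row`, `background`, and `icon`, while “Price” survives unchanged.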

This effectively created a bridge between design and code, aligning both sides without requiring manual effort and making the file significantly more structured and machine-readable.

#3: connecting Figma to Claude Code

Cleaning up the Figma file was only part of the process. The real shift happened when I connected it to Claude Code via MCP and started treating the design system as structured input, not just a visual file.

Instead of manually auditing inconsistencies, I used Claude Code to analyze the system directly: find missing or broken token references, detect hardcoded values inside components, surface orphan styles, and identify inconsistencies across similar patterns. MCP made this possible by exposing Figma as structured data - layers, naming, relationships - not just pixels.
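
In this workflow, Claude Code did the analysis by reading Figma data over MCP. The checks themselves are easy to express deterministically, which also shows why structure matters: they only work when every fill is either bound to a token or clearly raw. The sketch below assumes a hypothetical, simplified export format (`ExportedNode`, `fills`, `tokenRef`), not the real output of any MCP tool, and reads “orphan” as a token that is defined but never referenced.

```typescript
// Hypothetical, simplified node data; real MCP tool output will differ.
interface Fill {
  hex?: string; // raw color, present when the fill is not bound to a token
  tokenRef?: string; // token path, present when the fill is bound
}

interface ExportedNode {
  id: string;
  fills: Fill[];
  children?: ExportedNode[];
}

type Issue =
  | { kind: "hardcoded"; nodeId: string; value: string }
  | { kind: "missing-token"; nodeId: string; tokenRef: string };

interface AuditReport {
  issues: Issue[];
  unusedTokens: string[]; // defined but never referenced (orphans)
}

function audit(root: ExportedNode, definedTokens: string[]): AuditReport {
  const defined = new Set(definedTokens);
  const used = new Set<string>();
  const issues: Issue[] = [];

  const visit = (node: ExportedNode): void => {
    for (const fill of node.fills) {
      if (fill.tokenRef !== undefined) {
        used.add(fill.tokenRef);
        // Bound to a token that no longer exists: a broken reference.
        if (!defined.has(fill.tokenRef)) {
          issues.push({ kind: "missing-token", nodeId: node.id, tokenRef: fill.tokenRef });
        }
      } else if (fill.hex !== undefined) {
        // Raw value with no token at all: a hardcoded value.
        issues.push({ kind: "hardcoded", nodeId: node.id, value: fill.hex });
      }
    }
    for (const child of node.children ?? []) visit(child);
  };

  visit(root);
  const unusedTokens = definedTokens.filter((t) => !used.has(t));
  return { issues, unusedTokens };
}

const report = audit(
  {
    id: "card",
    fills: [{ tokenRef: "color.surface" }],
    children: [
      { id: "button", fills: [{ hex: "#3366FF" }] },
      { id: "badge", fills: [{ tokenRef: "color.legacy.red" }] },
    ],
  },
  ["color.surface", "color.brand.primary"],
);
console.log(report);
```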

At the same time, I had already rebuilt a clean token architecture with Codex and synced it with GitHub. That became the source of truth. So instead of guessing what to fix, I could compare the existing Figma setup against the new system and systematically replace inconsistent values.
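
Comparing the Figma setup against the token source of truth comes down to a reverse lookup: flatten the token file, index tokens by value, and look up each hardcoded value. The sketch assumes a token file shaped like the Design Tokens Community Group draft format (nested groups, leaves carrying `$value`) and uses exact matching on normalized hex strings; both are assumptions, and the helper names are hypothetical. When several tokens share a value, all of them are returned, because choosing between a text color and a border color with the same hex needs more context than the value alone.

```typescript
// Token file in the spirit of the DTCG draft format (assumed, not confirmed).
interface TokenLeaf {
  $value: string;
  $type?: string;
}
interface TokenGroup {
  [key: string]: TokenGroup | TokenLeaf;
}

function isLeaf(node: TokenGroup | TokenLeaf): node is TokenLeaf {
  return typeof (node as TokenLeaf).$value === "string";
}

// Case and whitespace shouldn't block a match: "#1A1A1A" equals "#1a1a1a".
const normalize = (value: string): string => value.trim().toLowerCase();

// Flattens nested groups into "color.brand.primary" -> "#3366ff".
function flatten(group: TokenGroup, prefix: string[] = [], out = new Map<string, string>()): Map<string, string> {
  for (const key of Object.keys(group)) {
    const node = group[key];
    const path = prefix.concat(key);
    if (isLeaf(node)) out.set(path.join("."), normalize(node.$value));
    else flatten(node, path, out);
  }
  return out;
}

interface Fix {
  nodeId: string;
  value: string;
  candidates: string[]; // 0 = no token matches, 1 = safe swap, >1 = needs review
}

function proposeFixes(hardcoded: { nodeId: string; value: string }[], tokens: TokenGroup): Fix[] {
  // Reverse index: normalized value -> every token path that resolves to it.
  const byValue = new Map<string, string[]>();
  flatten(tokens).forEach((value, path) => {
    const paths = byValue.get(value) ?? [];
    paths.push(path);
    byValue.set(value, paths);
  });
  return hardcoded.map(({ nodeId, value }) => ({
    nodeId,
    value,
    candidates: byValue.get(normalize(value)) ?? [],
  }));
}

const tokens: TokenGroup = {
  color: {
    brand: { primary: { $value: "#3366FF", $type: "color" } },
    text: { default: { $value: "#1A1A1A", $type: "color" } },
    border: { default: { $value: "#1a1a1a", $type: "color" } },
  },
};

const fixes = proposeFixes(
  [
    { nodeId: "button", value: "#3366ff" },
    { nodeId: "title", value: "#1A1A1A" },
    { nodeId: "alert", value: "#FF0000" },
  ],
  tokens,
);
console.log(fixes);
```

Exact matching is deliberately conservative: `#FF0000` gets no candidate at all rather than a guessed “closest” token.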

The workflow became straightforward: Figma → MCP → Claude Code for analysis, GitHub → Codex as the token source of truth, and Claude Code again to map issues back to the system and suggest fixes.

In practice, this turned cleanup from a manual, visual task into a semi-automated system refactoring process. Once Figma is connected through MCP, the design system stops being a static library and becomes something AI can actually reason about. At that point, the quality of your structure directly defines the quality of the output.

Results and reflection

This process completely changed how I think about design systems. Before, cleanup was manual, slow, and unreliable at scale. You could fix things locally, but it was hard to be confident the system was actually consistent. Tokens existed, but they weren’t fully enforced, and Figma was still mostly a visual tool.

After introducing MCP and connecting Figma to code, cleanup became partially automated. Instead of hunting issues, I could detect and fix them systematically. The design system became aligned with code through GitHub and Codex, and Figma turned into a machine-readable system rather than just a design file.

The biggest shift is in how the work feels. What used to take hours now takes minutes with AI support, and instead of fixing UI case by case, I’m fixing the underlying system that generates it.

At this point, the design system is no longer just documentation for humans - it has become infrastructure that AI can use to build and evolve the product.